It was not until the last minute before Joe Biden’s inauguration that QAnon followers realised that the expected military takeover, or even a declaration of martial law by Donald Trump, was not going to happen. On social media platforms popular with conservatives and conspiracy theorists, such as Gab and MeWe, some expressed frustration, while others continued to promote conspiracy theories and the belief that Trump will come back to finish his work.

Interviewed by Reuters following the inauguration, Jared Holt, a researcher at the Atlantic Council, said: “I have never previously seen disillusionment on this scale in the QAnon communities which I monitor.”

It is still too early to predict the future of QAnon now that Donald Trump is no longer US president and the movement has been banned by leading social media platforms. But some effects are starting to be seen.

Thus far, 60% of accounts related to the movement have disappeared from Twitter, according to a report issued by social media analysts Graphika. A rapid decrease was registered after the riots at the US Capitol Building and after the platform suspended 70,000 accounts linked to QAnon in mid-January.

Inevitably, its followers found other resources. In an article published recently, The Verge revealed that a new platform called Clapper, based in Texas with just 15 employees, has become a new home for QAnon followers, having been downloaded half a million times in two weeks.

QAnon believers can also be found among yoga lovers on the web. Yoga influencers who promote miraculous cures and challenge social distancing and vaccines have become popular over the past months. This phenomenon has been described by researcher Marc-André Argentino as Pastel QAnon. The yoga platform Gaia has been invaded by them and by other conspiracy theorists, such as David Icke, who have similarly been banned from other networks.

Clapper, the new home of QAnon followers

According to Graphika, 8,859 out of 13,856 highly connected accounts that were engaging with QAnon hashtags last spring are now inactive. The report explains why:

“While some of these accounts are likely to have been deactivated by the users themselves, or suspended in previous enforcement actions, we believe that the vast majority of these accounts were removed in the most recent round of Twitter suspensions announced on January 12th,” says the report.

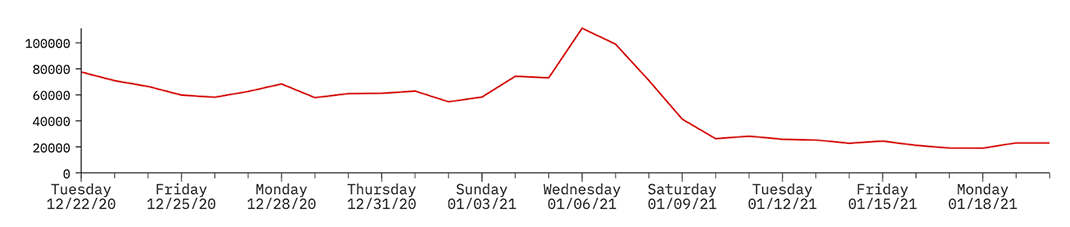

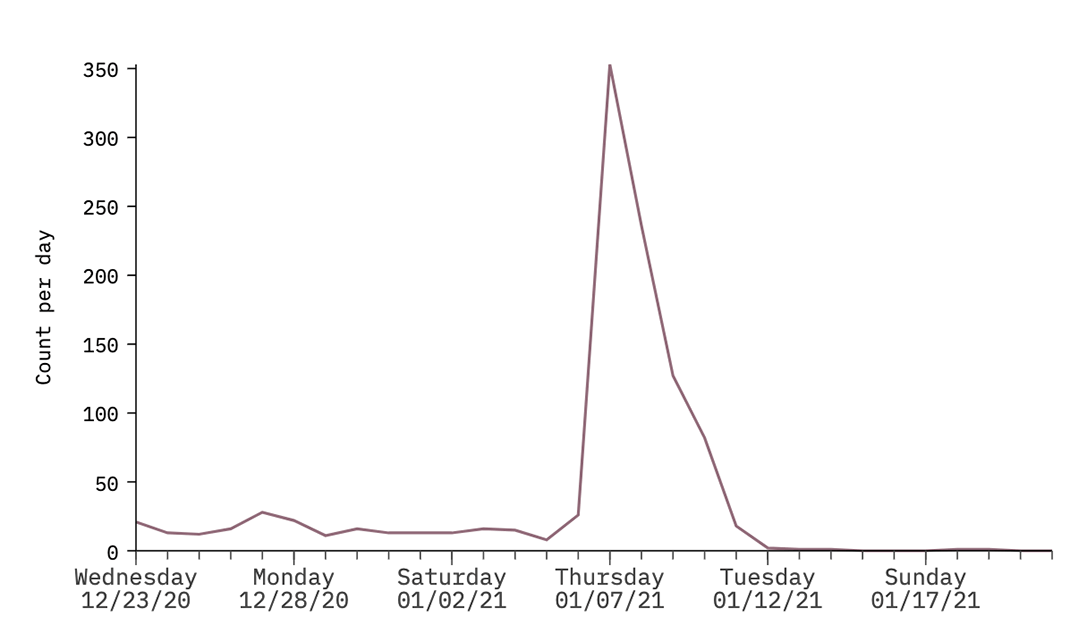

A timeline that plots the volume of tweets produced by QAnon users in this network shows a spike on January 6th—the day of the riots at the US Capitol Building in Washington, DC—before a rapid decrease in activity in the following days:

“There is a notable decline in the two days preceding the announcement from Twitter that it had removed more than 70,000 accounts sharing QAnon content on its platform. In that time, we saw the amount of content being produced by the most highly connected QAnon accounts on Twitter decrease by between 70-80%”.

The graph above indicates tweet volume over time for the core QAnon community in Graphika’s network map; activity decreases significantly between January 6th and 10th.

The research found that the latest wave of suspensions appears to have affected all of the online communities that comprise this QAnon Twitter network. The consultancy revealed that, in addition to the most dedicated adherents of the QAnon community, Trump-supporting accounts that engaged with QAnon content and Japanese QAnon users were also greatly impacted. As an example, it notes that 45% of the Japan-based group in Graphika’s QAnon network map is now inactive, compared with 62% of the core US QAnon conspiracy community.

“Preliminary analysis suggests that many of the accounts removed served as influential bridges between the different sub-groups that compose the larger QAnon community. Twitter announced that it had removed highly influential QAnon accounts, including those of Michael Flynn, former Trump attorney Sidney Powell, and former 8kun administrator Ron Watkins. The removed accounts appear to have been highly interconnected, suggesting they were well integrated in the online activity”.

The authors believe that, given the prominent and “bridging” role played by these accounts in the overall network, the recent suspensions have had the knock-on effect of decreasing the number of connections between Twitter users, resulting in a less dense online network that comprises a collection of isolated splinter communities.

“Their removal is likely to have a significant impact on the social cohesion and future coordination efforts of the QAnon community on Twitter.”

Graphika’s report found that 4,997 accounts from this network remain active. Those with the highest number of in-map followers are typically Twitter accounts that produce a high volume of content centred around support for Donald Trump and tend to steer clear of using QAnon terminology or identifiers. The research identified that these accounts have been preoccupied in recent days with lamenting the end of the Trump presidency and sharing articles from right-wing news outlets speculating on his next political move.

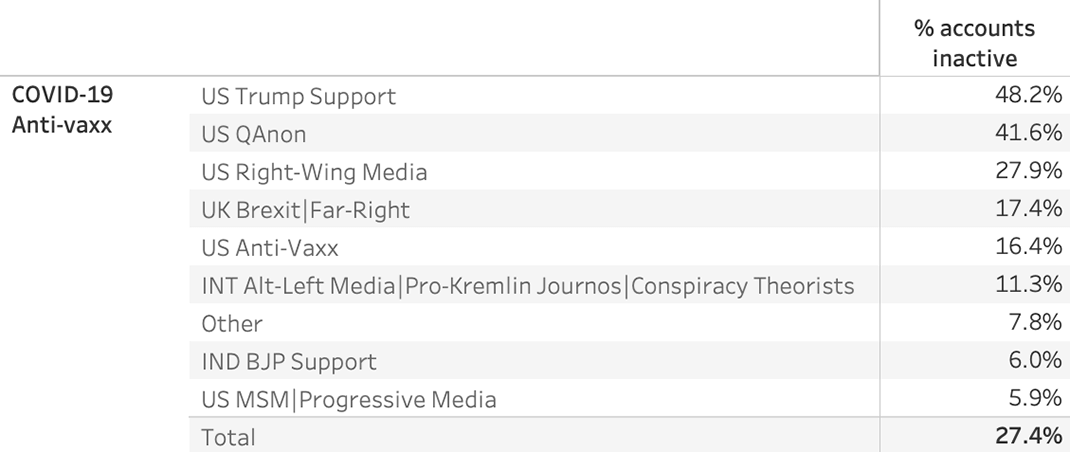

QAnon’s Role in the Covid-19 Anti-Vaxx Network

“While QAnon accounts made up just 17% of the overall network, the group was acting as a crucial bridge between accounts that supported Donald Trump and the core of the anti-vaxx movement in the US”.

Since this recent enforcement action from Twitter, close to 50% of the QAnon accounts participating in the Covid-19 anti-vaxx conversation have been deactivated, says the report. As a result, the suspended accounts are no longer able to act as an information vector carrying Covid-19 vaccine conspiracies, such as the ‘Bill Gates’s global depopulation’ or ‘Plandemic’ theories, to peripheral groups.

Graphika has conducted similar impact assessments at regular intervals following enforcement actions against QAnon by major social media platforms. In October of last year, following adjustments to platform policies from Facebook and YouTube, the firm observed that previous takedowns carried out during the summer did not appear to have had significant consequences for the ability of the QAnon community to produce and consume conspiratorial content.

“Enforcement of these policy changes was patchy, particularly for non-English content. This analysis found that for the same core QAnon network as is mentioned above, between 85-95% of the accounts were still active in October after Twitter announced a ‘crackdown’ in July.”

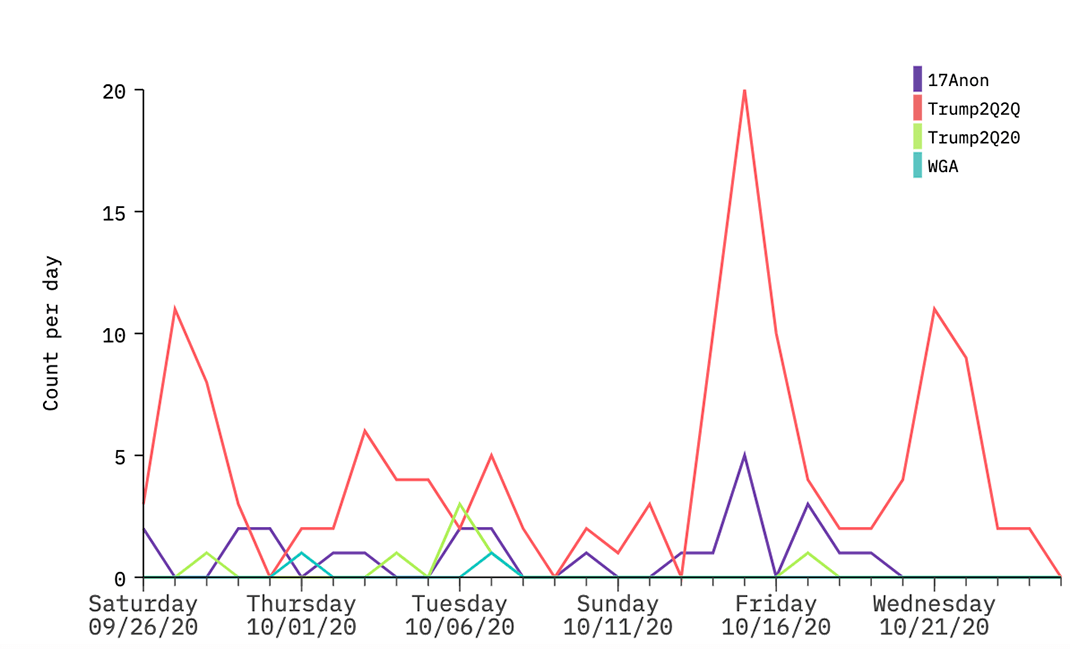

Graphika noted that many QAnon accounts exhibited a shift in behaviours, adapting their activity to avoid suspension.

“QAnon supporters typically shifted away from using their ‘trademark’ online signifiers, however, the extent of this response was dependent on the specifics of each platform. On Twitter, this included adapting known QAnon hashtags that are generally used in bios, usernames, and account descriptions, predominantly by trading numbers and letters (shown below). In terms of narratives, there has been a broad effort to soften QAnon rhetoric, for example by reframing raising awareness of ‘satanic child sex trafficking rings operating at the highest levels of US government’ into a focus on protecting the rights of children, which has a more mainstream audience”.

The consultancy states that platforms have typically been slow to respond to these behavioural shifts.

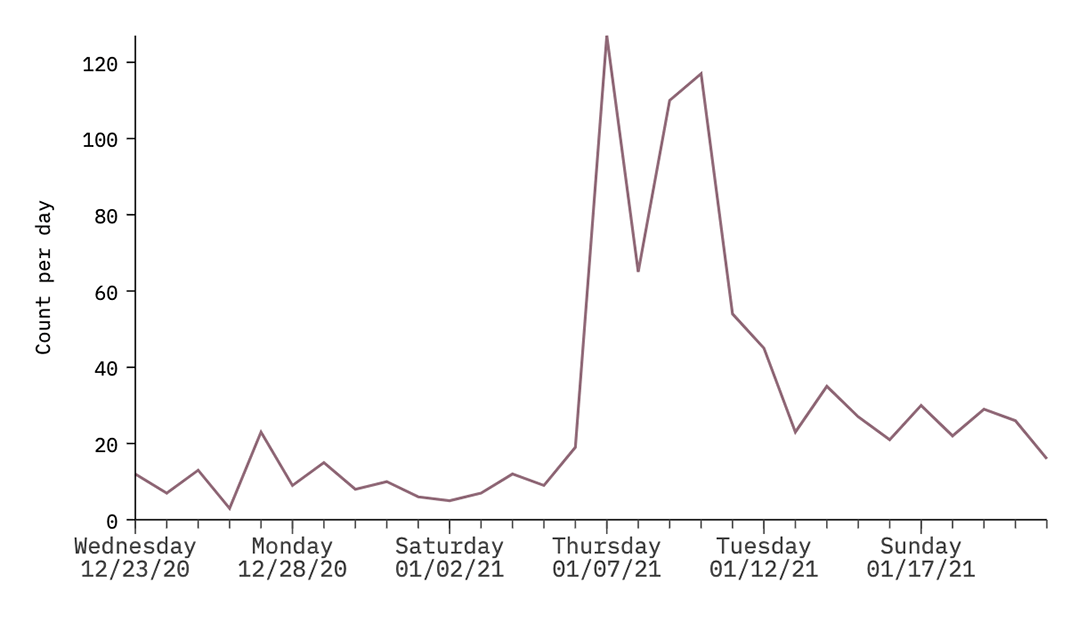

The report also found that many of the accounts that remain online have been advocating for the migration of the QAnon community to alt-tech platforms such as Parler, Gab, Clouthub, Telegram, and MeWe, with a sharp uptick in the sharing of the Parler and Gab domains by QAnon accounts on January 6th, the day of the Capitol Hill riots.

Tweets by US QAnon users containing links to parler.com (above) and gab.com (below); both indicate a rapid increase on January 6th.

Clapper, the TikTok clone

This migration is borne out by Clapper’s growth. The app was launched last July as a “Free Speech Short Video” app, promising less moderation. It is said to have been downloaded over half a million times, with a considerable amount of that growth coming in just the past two weeks.

The Verge, a tech news site, compared Clapper’s “For You” page to One America News Network, showing videos of people calling themselves “patriots,” but also plenty of anti-vaxx misinformation and videos calling out Democrats as “paedophiles.” It adds that, according to Clapper’s website, #trump2020 and other political hashtags are some of the most popular on the platform.

The story highlights a review on the Google Play Store, in which the user states that the app “has now taken the place of Parler in terms of [QAnon] and bigots taking it over as their own little echo chamber”.

In a press release issued last November, the owner, Clapper Media Group, announced the launch of what it called “the first independent free speech video platform designed to allow internet users to voice their opinion”.

“Clapper, formerly NewsClapper, is an unbiased, free speech short video social platform that has emerged due to the need for a censorship-free social media platform where all opinions matter. The platform has been launched in line with the company’s goal of encouraging freedom of speech by allowing people to freely express their personal opinions, political views or share local video news without limitations.”

It claimed that “unfortunately, social media’s true essence as a means of expressing one’s opinion seems to have been defeated with the major platforms censoring and banning unwanted content. Consequently, it has become increasingly difficult for people to exercise their right to freedom of expression, which is where Clapper is looking to change the narrative”.

It also presented itself as “designed to champion the trend and movement of “Free speech” while breaking the censorship power of big social media and streaming platforms”.

Clapper CEO and co-founder Edison Chen admitted to The Verge that the app’s recent growth has come from QAnon believers and right-wing political accounts that are looking for less censorship or have been banned from other social media platforms. When asked if Clapper allows QAnon content on its platform, Chen first told The Verge that moderation largely relies on reports from its users, but that content that could incite violence is prohibited. Later, he said that Clapper had “identified” several QAnon-related users and was conducting an investigation into whether they had violated the app’s community standards.

And one doesn’t need to be a member to access QAnon-related content. In a Google search for “Clapper QAnon”, a link to videos associated with the movement appears on the first page. Such content has helped to disseminate claims that Trump was battling against a dangerous “deep state” within the US government.

QAnon and Yoga, a dangerous combination

In a blog post on January 12, Twitter announced that it was “permanently suspending thousands of accounts that were primarily dedicated to sharing QAnon content”.

The word “primarily” means that not all accounts promoting QAnon would be targeted. Those left untouched include individuals or groups that spread its beliefs within other communities, such as yoga’s. This is not a new trend. Since the beginning of the pandemic, researchers have noted that QAnon was finding its way into the world of yoga.

An early warning was given by Seane Corn, a prominent yoga instructor in the US and social justice activist. In an interview with Yahoo News, she revealed that she started to receive strange messages from friends and fellow teachers in March:

“What they were trying to help me to understand was … not to trust the Government, that there was this deep-state conspiracy related to COVID where, via Bill Gates, they’re going to be microchipping us through the use of mandated vaccines,” Corn told Yahoo News. They used terms like “great awakening” and “red pill.”

Researcher Marc-André Argentino, who has been studying this phenomenon, has put a name to it: Pastel QAnon. In an article on Nieman Lab published in October 2020, he wrote:

“One way Q supporters adapted was through lighter forms of propaganda — something I call Pastel QAnon. As a way to circumvent the initial Facebook sanctions, women who believe in the QAnon conspiracies used warm and colourful images to spread QAnon theories through health and wellness communities and by infiltrating legitimate charitable campaigns against child trafficking.”

Cecile Guerin, a researcher on online disinformation and a yoga teacher herself, wrote in a Wired UK article that in the early days of lockdown, she started to find posts about how juices, miracle cures and turmeric could boost immunity and ward off the virus. As the pandemic intensified, the disinformation became darker, from anti-vaxx content and Covid denialism to calls to ‘question established truths’ and wilder conspiracy theories.

In her opinion, some yoga influencers and new age spirituality leaders saw the pandemic as an opportunity and began providing a platform to conspiracy theories, citing the example of Guru Jagat, a Kundalini yoga teacher with 67,000 Instagram followers and 21,000 YouTube subscribers, who invited QAnon conspiracy theorist Kerry Cassidy for an hour-long interview on YouTube.

Guerin says that it’s hard to tell just how far conspiracy theories have infiltrated the wellness and yoga space:

“Researchers have tried to document the recent revival of ‘conspirituality’ – the intersection of yoga, spirituality and holistic health with conspiracy theories. The Conspirituality podcast, co-founded by cult survivor and yoga teacher Matthew Remski, lists figures in the wellness industry who have shared conspiracy theories and aims at exposing ‘faux-progressive wellness utopianism.’”

She believes the trend stretches beyond influencers, as conspiracy content shared by yoga and wellness enthusiasts also appears in various forms in spaces that are difficult for researchers to access, including private Facebook groups, or in the form of short-lived content such as Instagram stories.

Guerin also associates the situation with the crisis suffered by the yoga industry. A survey in the US showed a 23% increase in yoga studio closures in 2020, depriving thousands of teachers of income and encouraging many to capitalise on content that generates traffic and cash.

“For modern-day influencers, the motivations for sharing QAnon content vary from cynical opportunism to ideology. Yoga entrepreneurs have become adept at seizing the opportunities the pandemic has presented and in preying on the vulnerabilities of their audiences.”

She reminds us that although the yoga and wellness online communities are largely female, educated and middle-class – seemingly unusual candidates for the spread of conspiracy theories – research has shown that women are more likely to believe anti-vaxx disinformation, with female-dominated yoga and wellness groups a gateway to these beliefs.

In a recent post before Biden’s inauguration, she attacks social distancing and links illness to isolation, suggesting that something big would happen within a few days, in a reference to QAnon’s “great awakening”.

Gaia, much more than “downward facing dog pose”

This trend was also noted by the online newspaper Daily Dot, where Krystal Tini can also be found. Last December, it featured the platform Gaia, which offers yoga videos through a subscription model. According to the Daily Dot’s article, nearly 100,000 new paying members joined the platform in the first nine months of 2020, bringing its audience up to nearly 700,000 subscribers in 185 countries.

The offering goes beyond yoga classes, with an extensive library of more than 8,000 videos covering everything from aliens to anti-vaxxing.

Gaia did not respond to the Daily Dot’s questions about how users are selected for verification, nor to broader questions about how videos are chosen or commissioned for the site. The site’s Terms of Use and Privacy offer the only insight into any sort of moderation policy, noting that “we see membership to our network and our community as a privilege, not a right,” which means they will remove “disrespectful” comments and reserve the right to revoke memberships.

What will happen now?

This difficult question was answered by researcher Marc-André Argentino in an article on The Conversation published right after the conflicts in Washington.

In his opinion, we are long past the point of simply asking how people can believe in QAnon when so many of its claims fly in the face of facts: the attack on the Capitol showed the real dangers posed by QAnon adherents.

“Their militant and anti-establishment ideology — rooted in a quasi-apocalyptic desire to destroy the existing, corrupt world and usher in a promised golden age — was on full display for the whole world to see”.

And they are here to stay:

“QAnon, along with other far-right actors, will likely continue to come together to achieve their insurrection goals. This could lead to a continuation of QAnon-inspired violence as the movement’s ideology continues to grow in American culture”.
